I’ve been doing a lot of experimenting with neural-style over the last month, and I think I’ve discovered a few exciting applications of the technique that I haven’t seen anyone else try yet. The true power of this algorithm really shines when you can see concrete examples.

Skip to the Applications part of this post to see the outputs from my experimentation if you are already familiar with DeepDream, Deep Style, and all the other latest happenings in generating images with deep neural networks.

Background and History

On May 18, 2015 at 2 a.m., Alexander Mordvintsev, an engineer at Google, did something with deep neural networks that no one had done before. He took a net designed for recognizing objects in images and used it to generate objects in images. In a sense, he was telling these systems that mimic the human visual cortex to hallucinate things that weren’t really there. The results looked remarkably like LSD trips or what a schizophrenic person sees on a blank wall.

Mordvintsev’s discovery quickly gathered attention at Google once he posted images from his experimentation on the company’s internal network. On June 17, 2015, Google published a blog post about the technique (dubbed “Inceptionism”) and how it was useful for opening up the notoriously black-boxed neural networks using visualizations that researchers could examine. These machine hallucinations were key for identifying the features of objects that neural networks used to tell one object from another (like a dog from a cat). But the post also revealed the beautiful results of applying the algorithm iteratively on its own outputs and zooming in at each step.

The internet exploded in response to this post. And once Google posted the code for performing the technique, people began experimenting and sharing their fantastic and creepy images with the world.

Then, on August 26, 2015, a paper titled “A Neural Algorithm of Artistic Style” was published. It showed how one could identify which layers of deep neural networks recognized stylistic information of an image (and not the content) and then use this stylistic information in Google’s Inceptionism technique to paint other images in the style of any artist. A few implementations of the paper were put up on GitHub, and the internet exploded again. This time, the images produced were less like psychedelic nightmares and more like the next generation of Instagram filters (reddit how-to).

People began to wonder what all of this meant for the future of art. Some of the results produced were indistinguishable from the style of dead artists’ works. Was this a demonstration of creativity in computers or just a neat trick?

On November 19, 2015, another paper was released that demonstrated a technique for generating scenes from convolutional neural nets (implementation on GitHub). The program could generate random (and very realistic) bedroom images from a neural net trained on bedroom images. Amazingly, it could also generate the same bedroom from any angle. It could also produce images of the same procedurally generated face from any angle. Theoretically, we could use this technology to create procedurally generated game art.

The main thing holding this technology back from revolutionizing procedurally generated video games is that it is not real-time. Using neural-style to apply artistic style to a 512 by 512 pixel content image could take minutes even on the top-of-the-line GTX Titan X graphics card. Still, I believe this technology has a lot of potential for generating game art even if it can’t act as a real-time filter.

Applications: Generating Satellite Images for Procedural World Maps

I personally know very little about machine learning, but I have been able to produce a lot of interesting results by using the tool provided by neural-style.

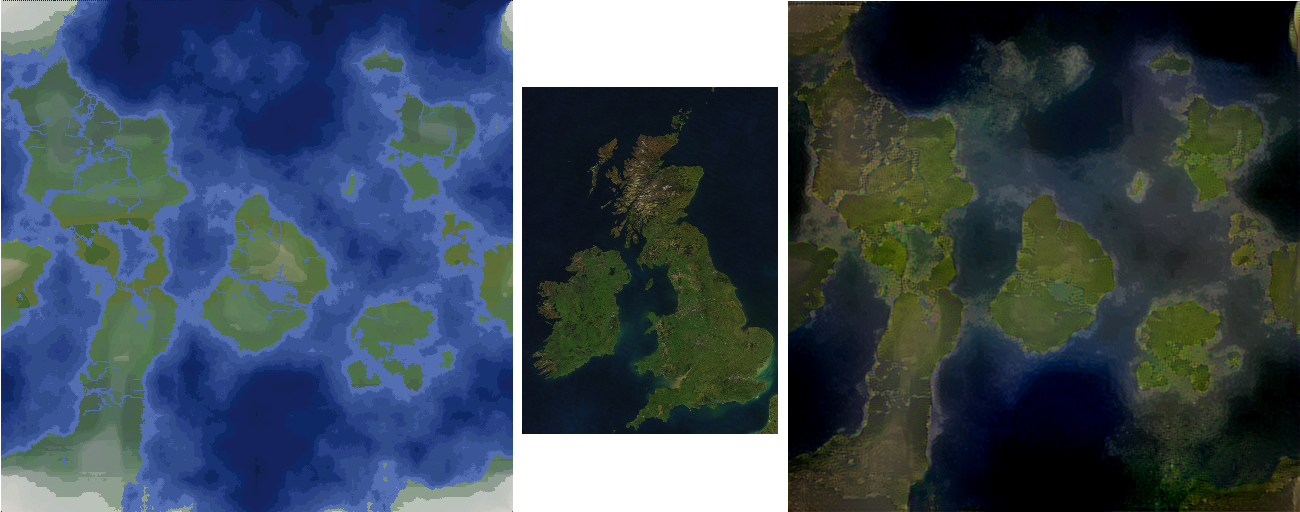

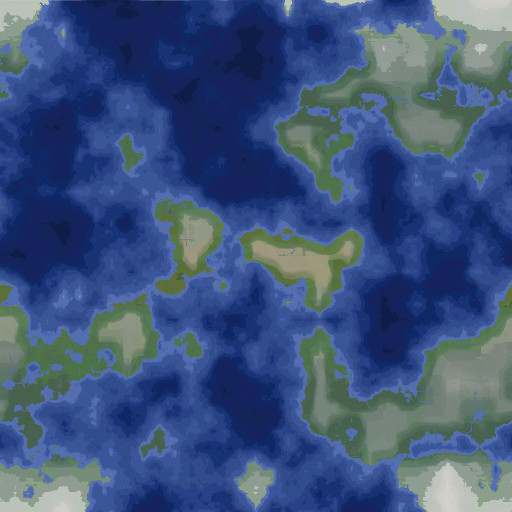

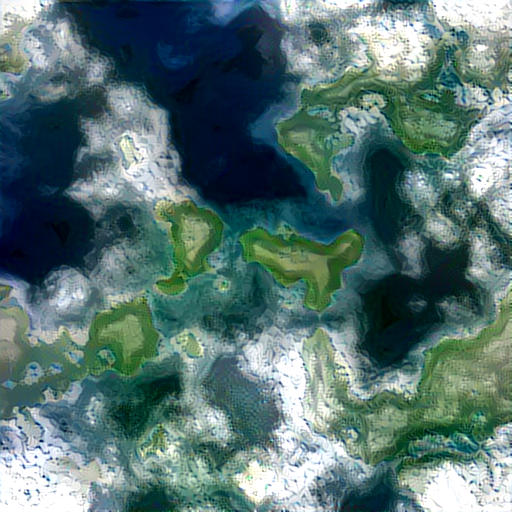

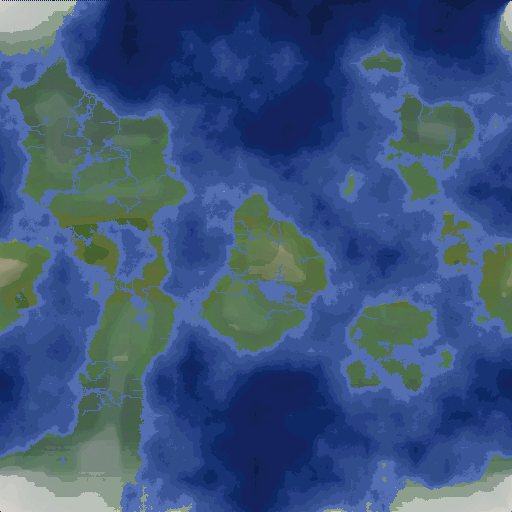

Inspired by Kaelan’s procedurally generated world maps, I wanted to extend the idea by generating realistic satellite images of the terrain maps. The procedure is simple: take a generated terrain map as the content image and apply the style of a real-world satellite image to it using neural-style.
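
For anyone who wants to try this, here is a minimal sketch of how one such run could be scripted. It assumes the Torch implementation of neural-style, launched as `th neural_style.lua`. The file names are placeholders, and the helper `neural_style_command` is hypothetical, not part of the tool. The flag names are the ones I understand that implementation to accept, so check them against the README of whatever version you install.

```python
import shlex


def neural_style_command(content_image, style_image, output_image,
                         image_size=512, gpu=0, backend=None):
    """Build the argument list for a single neural-style run.

    Assumption: the tool is started with `th neural_style.lua` and takes
    -content_image, -style_image, -output_image, -image_size, -gpu and
    -backend. Verify these against the README before relying on them.
    """
    cmd = [
        "th", "neural_style.lua",
        "-content_image", content_image,   # the generated terrain map
        "-style_image", style_image,       # the real-world satellite photo
        "-output_image", output_image,
        "-image_size", str(image_size),    # longest side of the output, in pixels
        "-gpu", str(gpu),                  # assumed: -1 selects the CPU
    ]
    if backend is not None:
        # Backend name (for example an OpenCL one) is an assumption; see the README.
        cmd += ["-backend", backend]
    return cmd


if __name__ == "__main__":
    cmd = neural_style_command("terrain_map.png", "satellite.jpg",
                               "terrain_satellite.png")
    # Print the command instead of running it, so it can be inspected first.
    print(" ".join(shlex.quote(arg) for arg in cmd))
```

Passing the resulting list to `subprocess.run(cmd, check=True)` from the neural-style checkout would start the run. Building the command as a list keeps file names with spaces intact.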

The generated output takes on whatever terrain is in the satellite image. Here is the output from processing one of Kaelan’s maps with an arctic satellite image:

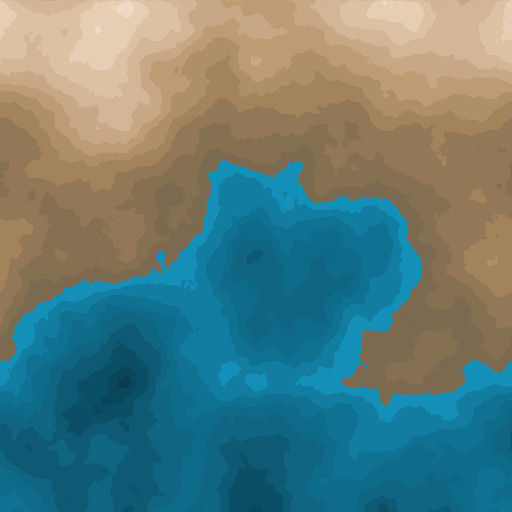

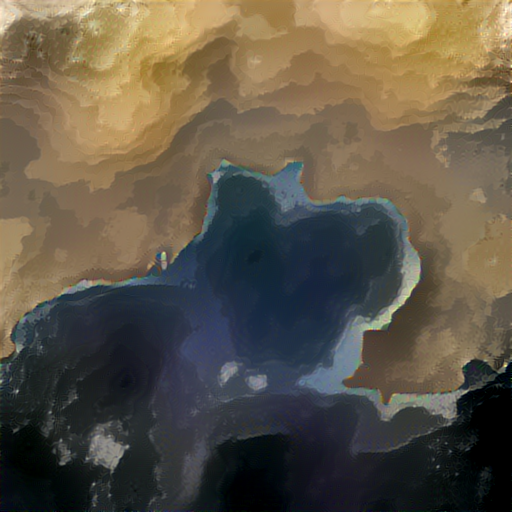

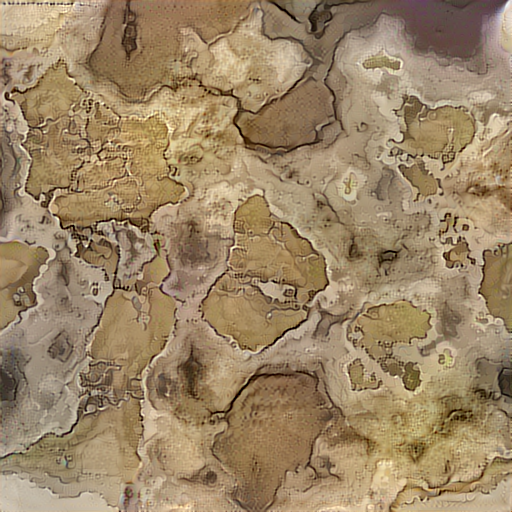

And again, with one of Kaelan’s desert maps and a satellite image of a desert:

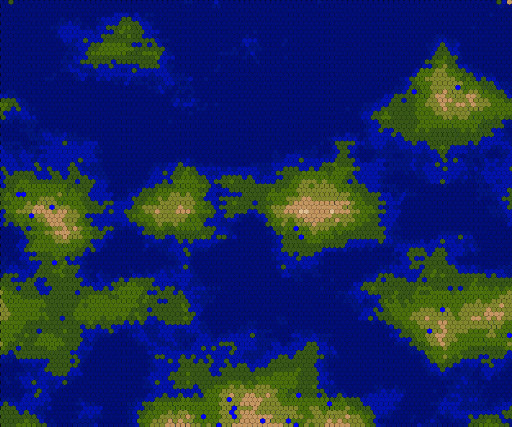

It even works with Kaelan’s generated hexagon maps. Here’s an island hexagon map plus a satellite image of a volcanic island:

This image even produced an interesting three-dimensional effect because of the volcano in the satellite image.

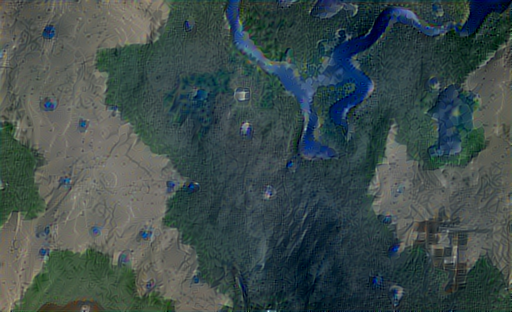

By the way, this also works with Minecraft maps. Here’s a Minecraft map I found on the internet plus a satellite image from Google Earth:

No fancy texture packs or 3-D rendering needed :).

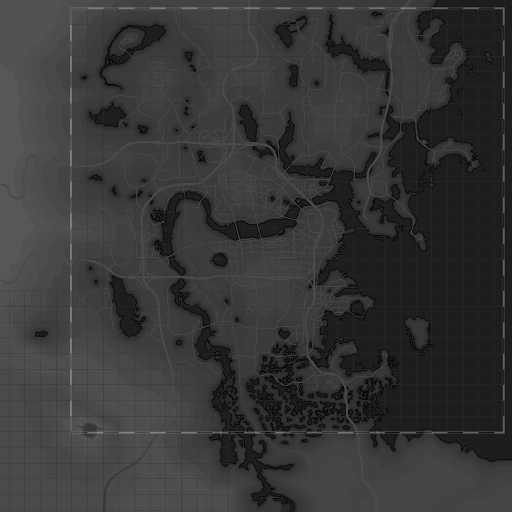

Here is the Fallout 4 grayscale map plus a satellite image of Boston:

Unfortunately, it puts the dense, built-up part of the city in the wrong part of the geographic area. But this is understandable, since we gave the algorithm no information on where that is on the map.

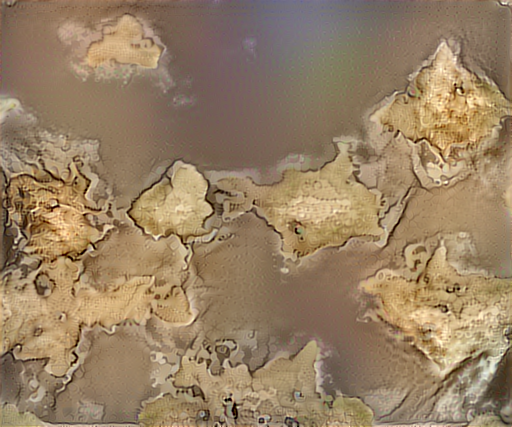

We can also make the generated terrain maps look like old hand-drawn maps using neural-style. With Kaelan’s terrain map as the content and the in-game Elder Scrolls IV: Oblivion map of Cyrodiil as the style, we get this:

It looks cool, but the water isn’t conveyed very clearly (e.g., it makes deep water look like land). Neural-style seems to work better when there is lots of color in both images.

Here is the output of the hex terrain plus satellite map above combined with the Cyrodiil map, which looks a little cleaner:

I was interested to see what neural-style could generate from random noise, so I rendered some clouds in GIMP and ran it with a satellite image of Mexico City from Google Earth (by the way, I’ve been getting high quality Google Earth shots from earthview.withgoogle.com).

Not bad for a neural net without a degree in urban planning.

I also tried generating from random noise with a satellite image of a water treatment plant in Peru:
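
If you would rather script the noise than render it in GIMP, here is a rough sketch of one way to make a cloud-like content image. It sums a few octaves of bilinearly interpolated value noise, which is my stand-in for a cloud render and not necessarily what GIMP does internally. The function names `cloud_noise` and `write_pgm` are made up for this example, and PGM is used only because it needs no extra libraries.

```python
import random


def cloud_noise(width, height, octaves=4, seed=0):
    """Return a height x width grid of floats in [0, 1] from summed value noise."""
    rng = random.Random(seed)
    total = [[0.0] * width for _ in range(height)]
    amplitude, cells, norm = 1.0, 4, 0.0
    for _ in range(octaves):
        # Random values on a coarse (cells + 1) x (cells + 1) lattice.
        lattice = [[rng.random() for _ in range(cells + 1)]
                   for _ in range(cells + 1)]
        for y in range(height):
            fy = y * cells / height
            y0 = int(fy)
            ty = fy - y0
            for x in range(width):
                fx = x * cells / width
                x0 = int(fx)
                tx = fx - x0
                # Bilinear interpolation between the four surrounding lattice points.
                top = lattice[y0][x0] * (1 - tx) + lattice[y0][x0 + 1] * tx
                bottom = lattice[y0 + 1][x0] * (1 - tx) + lattice[y0 + 1][x0 + 1] * tx
                total[y][x] += amplitude * (top * (1 - ty) + bottom * ty)
        norm += amplitude
        amplitude *= 0.5   # each finer octave contributes half as much
        cells *= 2         # and has twice the spatial frequency
    return [[value / norm for value in row] for row in total]


def write_pgm(path, grid):
    """Write the grid as an 8-bit binary PGM (grayscale) file."""
    height, width = len(grid), len(grid[0])
    with open(path, "wb") as f:
        f.write(b"P5\n%d %d\n255\n" % (width, height))
        for row in grid:
            f.write(bytes(int(value * 255) for value in row))
```

Calling `write_pgm("clouds.pgm", cloud_noise(512, 512))` produces a grayscale image that can be converted to whatever format your tool accepts and used as the content image. Changing `seed` gives a different layout.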

Applications: More Fun

For fun, here are some other outputs that I liked.

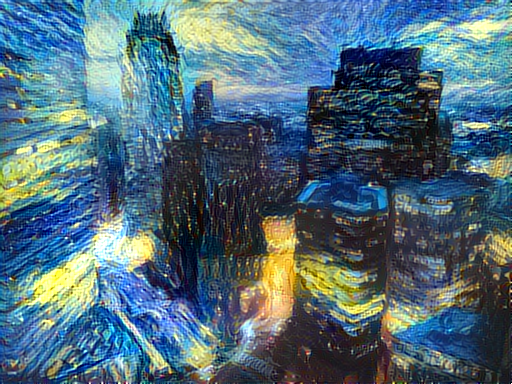

My photo of Boston’s skyline as the content and Vincent van Gogh’s The Starry Night as the style:

A photo of me (by Aidan Bevacqua) and Forest in the end of Autumn by Caspar David Friedrich:

Another photo of me by Aidan in the same style:

A photo of me on a mountain (by Aidan Bevacqua) and pixel art by Paul Robertson:

A photo of a park in Copenhagen I took and a painting similar in composition, Avenue of Poplars at Sunset by Vincent van Gogh:

My photo of the Shenandoah National Park and this halo graphic from GMUNK:

A photo of me by Aidan and a stained glass fractal:

Same photo of me and some psychedelic art by GMUNK:

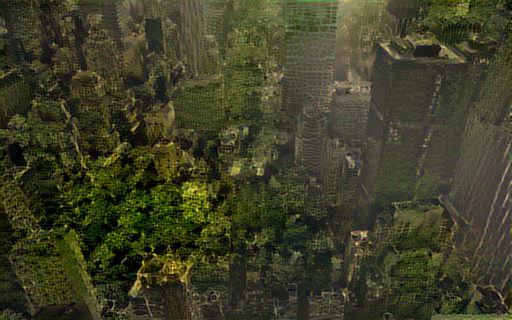

New York City and a rainforest:

Kowloon Walled City and a National Geographic Map:

A photo of me by Aidan and Head of Lioness by Theodore Gericault:

Photo I took of a Norwegian forest and The Mountain Brook by Albert Bierstadt:

Limitations

I don’t have infinite money for a GTX Titan X, so I’m stuck with using OpenCL on my more-than-a-few-generations-old AMD card. It takes about half an hour to generate one 512x512 px image on my setup (which makes the feedback loop for correcting mistakes very long). And sometimes neural-style refuses to run on my GPU (I suspect it runs out of VRAM), so I have to run it on my CPU, which takes even longer…

I am unable to generate bigger images (though the author has been able to generate up to 1920x1010 px). As the size of the output increases, the memory and time needed to generate it also increase. And it’s not practical to just generate thumbnails to test parameters: increasing the image size will probably produce a very different image, because the other parameters depend on the image size but stay the same.
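
To get a feel for the jump in size, here is a back-of-the-envelope sketch comparing pixel counts. It assumes, purely for illustration, that run time grows in proportion to the number of pixels. That is my simplification and not a measurement, so treat the result as a rough lower bound at best. Both helper names are made up for this example.

```python
def pixel_ratio(small, large):
    """Return how many times more pixels `large` has than `small`."""
    small_w, small_h = small
    large_w, large_h = large
    return (large_w * large_h) / (small_w * small_h)


def estimate_minutes(base_minutes, small, large):
    """Scale a measured run time by the pixel ratio (assumes linear scaling)."""
    return base_minutes * pixel_ratio(small, large)


if __name__ == "__main__":
    small, large = (512, 512), (1920, 1010)
    print(f"{pixel_ratio(small, large):.1f}x as many pixels")
    # Half an hour per 512x512 image is the figure from my own setup.
    print(f"about {estimate_minutes(30, small, large):.0f} minutes if time scaled linearly")
```

Under that assumption, a 1920x1010 image has roughly 7.4 times as many pixels as a 512x512 one, which would stretch my half-hour run to well over three hours.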

Some people have had success running these neural nets on GPU spot instances in AWS. It would certainly be cheaper than buying a new GPU in the short term.

So, I have a few more ideas for what to run, but it will take me quite a while to get through the queue.